- Joined: Jan 8, 2019
- Messages: 96

...as my 6yo niece says any time I enter the room, Nerd Alert.

We pay a ton of attention to Afflift follow-alongs, and this feature was inspired by you all right here in this forum, especially @Luke. Given how much guesswork we see going on, we figured it was time to incorporate statistical significance into our tracker's reports, specifically for deciding what to cut, boost, or run, and when.

We are curious how many of you, if any, currently export report data from your tracker in order to make informed boost/cut/run decisions based on confidence intervals.

In super-affiliate land this is quite common (not sure if you've seen any of the incredibly complex boost/cut/run spreadsheets out there), so we decided to do a metric ton of math to create inline "confidence" reports for each traffic source token (e.g. pub, widget).

We are working on a free tool that lets people calculate these with whatever tracker they use. Unless you already use Kintura, go to the calculator linked below, enter Denominator: [number of visits] and Numerator: [number of conversions], and click Compute. The resulting Interval around Proportion gives you, with 95% confidence, the lower and upper bounds of the conversion rate you can expect from future traffic:

http://statpages.info/confint.html

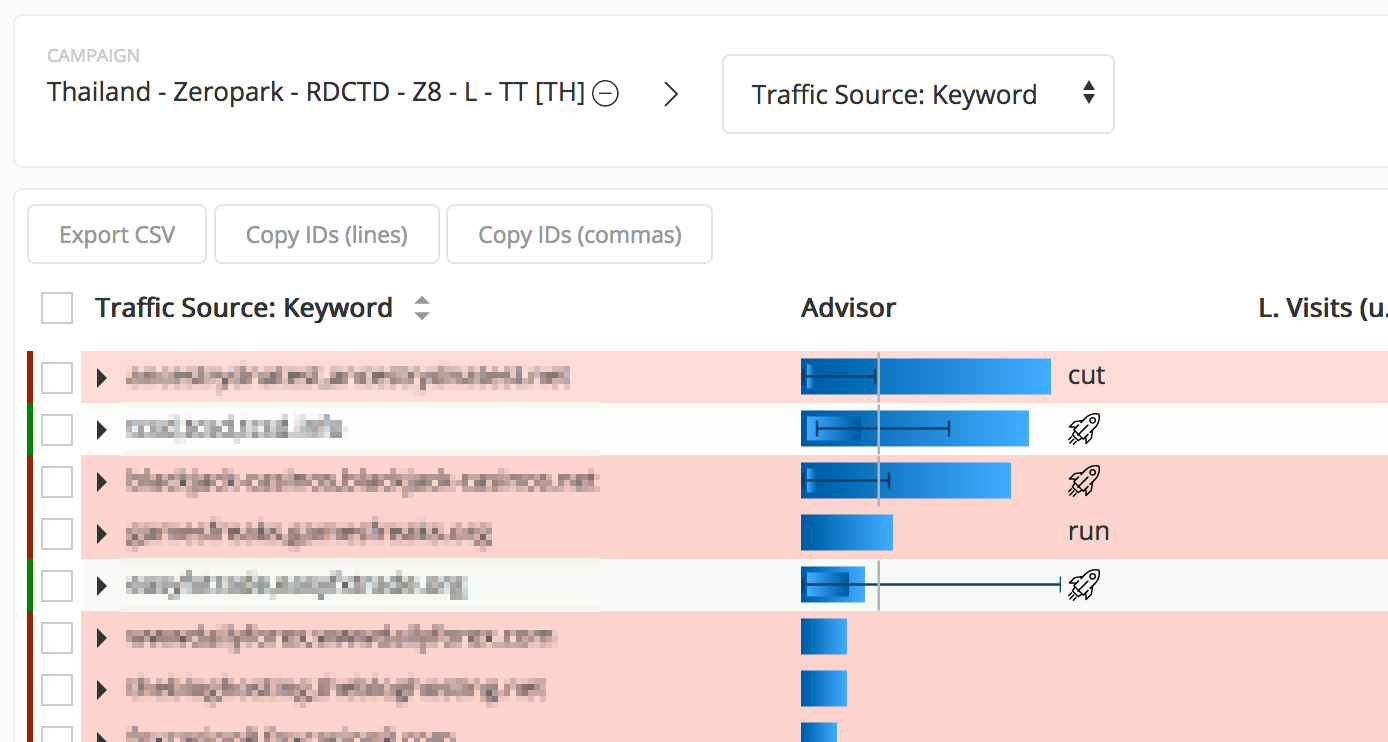
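If you'd rather script this than paste numbers into a web form, here is a minimal Python sketch of the same idea. It uses the Wilson score interval, which is an assumption on my part: the linked calculator may use a different method, so expect its bounds to differ slightly. The function name `wilson_interval` and the sample numbers are made up for illustration.

```python
import math


def wilson_interval(conversions: int, visits: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate confidence interval for a conversion rate.

    Uses the Wilson score interval; z = 1.96 corresponds to roughly 95% confidence.
    Returns (lower, upper) as proportions between 0 and 1.
    """
    if visits <= 0:
        raise ValueError("visits must be positive")
    if not 0 <= conversions <= visits:
        raise ValueError("conversions must be between 0 and visits")

    p = conversions / visits              # observed conversion rate
    z2 = z * z
    denom = 1 + z2 / visits
    center = (p + z2 / (2 * visits)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / visits + z2 / (4 * visits * visits))
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical numbers: 5 conversions out of 400 visits.
low, high = wilson_interval(conversions=5, visits=400)
print(f"95% interval: {low:.2%} to {high:.2%}")
```

The fewer visits you have, the wider the interval gets, which is the whole point: a keyword with 40 visits and 0 conversions is not yet proven bad.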

Below is a real example with traffic from Zeropark tracked in our tracker, Kintura. In the Advisor column, the vertical grey bar represents the minimum viable conversion rate for this keyword to avoid being cut, based on a desired minimum ROI of 40% (on this campaign). The dark blue error bars indicate the probable range of the conversion rate on future traffic (at 95% confidence). As you can see, when the upper error bar falls below the minimum viable conversion rate, it's a cut. (Please keep in mind this campaign is in a very early testing phase... and also Zeropark is awesome.)
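For anyone who wants to reproduce the cut rule in a spreadsheet or script, here is a rough Python sketch. It is not Kintura's actual implementation. It assumes ROI = (revenue - cost) / cost with a flat cost per visit and a flat payout per conversion, and the "boost" rule (lower bound above the threshold) is my own assumption by symmetry with the cut rule. The function names and all numbers are hypothetical.

```python
def min_viable_cr(cost_per_visit: float, payout: float, min_roi: float) -> float:
    """Lowest conversion rate that still hits the desired minimum ROI.

    Assumes ROI = (revenue - cost) / cost, with revenue = visits * cr * payout
    and cost = visits * cost_per_visit. Solving ROI >= min_roi for cr gives
    cr >= cost_per_visit * (1 + min_roi) / payout.
    """
    if payout <= 0:
        raise ValueError("payout must be positive")
    return cost_per_visit * (1 + min_roi) / payout


def advise(ci_low: float, ci_high: float, threshold: float) -> str:
    """Compare a conversion-rate confidence interval against the threshold."""
    if ci_high < threshold:
        return "cut"    # even the optimistic bound misses the minimum viable rate
    if ci_low > threshold:
        return "boost"  # assumption: even the pessimistic bound clears it
    return "run"        # interval straddles the threshold: not enough data yet


# Hypothetical campaign: $0.01 per visit, $2.00 payout, 40% minimum ROI.
threshold = min_viable_cr(cost_per_visit=0.01, payout=2.00, min_roi=0.40)
print(f"minimum viable conversion rate: {threshold:.2%}")
print(advise(ci_low=0.001, ci_high=0.005, threshold=threshold))
print(advise(ci_low=0.002, ci_high=0.020, threshold=threshold))
```

The "run" case is the interesting one: the observed rate may look bad, but the interval still overlaps the threshold, so cutting would be a guess.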

What makes this example great is that it shows two instances where you might otherwise have cut the keyword, even though there wasn't enough data to make a truly informed decision based on statistical significance. I'm going to stop blabbing and wait for questions if you have them.
